Exponential growth is the baseline

by Jason Crawford · February 21, 2021

When we consider the question of “stagnation,” we are assuming an implicit answer to an underlying question: relative to what? What should we expect?

I have a simple answer: Our baseline expectation should be no less than exponential growth.

I will give both historical and theoretical reasons for this. Then, I will address concerns about the inputs to exponential growth: whether those too need to grow exponentially, and what problems that poses.

Exponentials in practice

My first reason for an exponential baseline is that we have plenty of historical examples of exponential growth sustained over long periods.

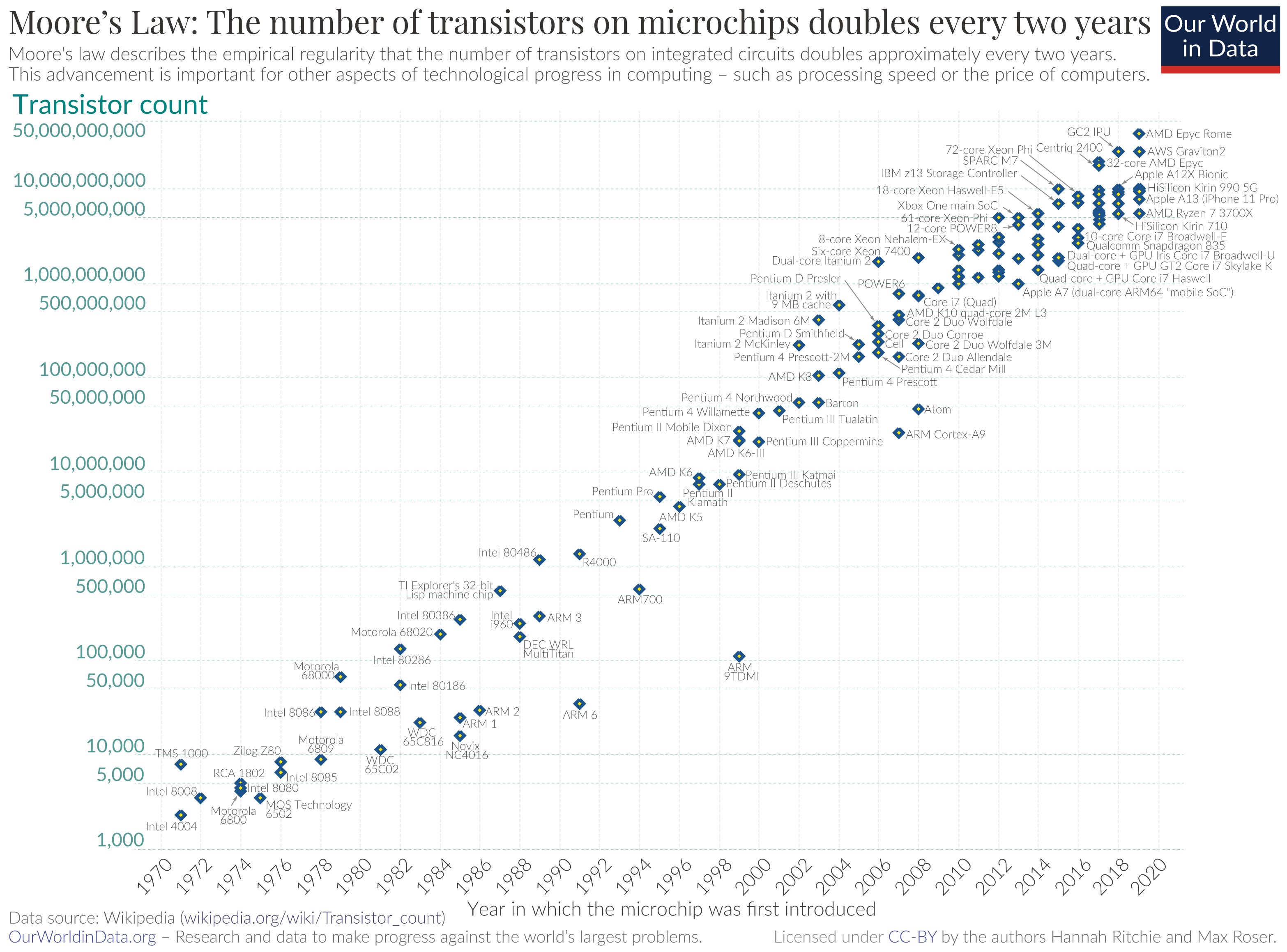

One of the most famous is Moore’s Law, the exponential growth in the number of transistors fitting in an integrated circuit:

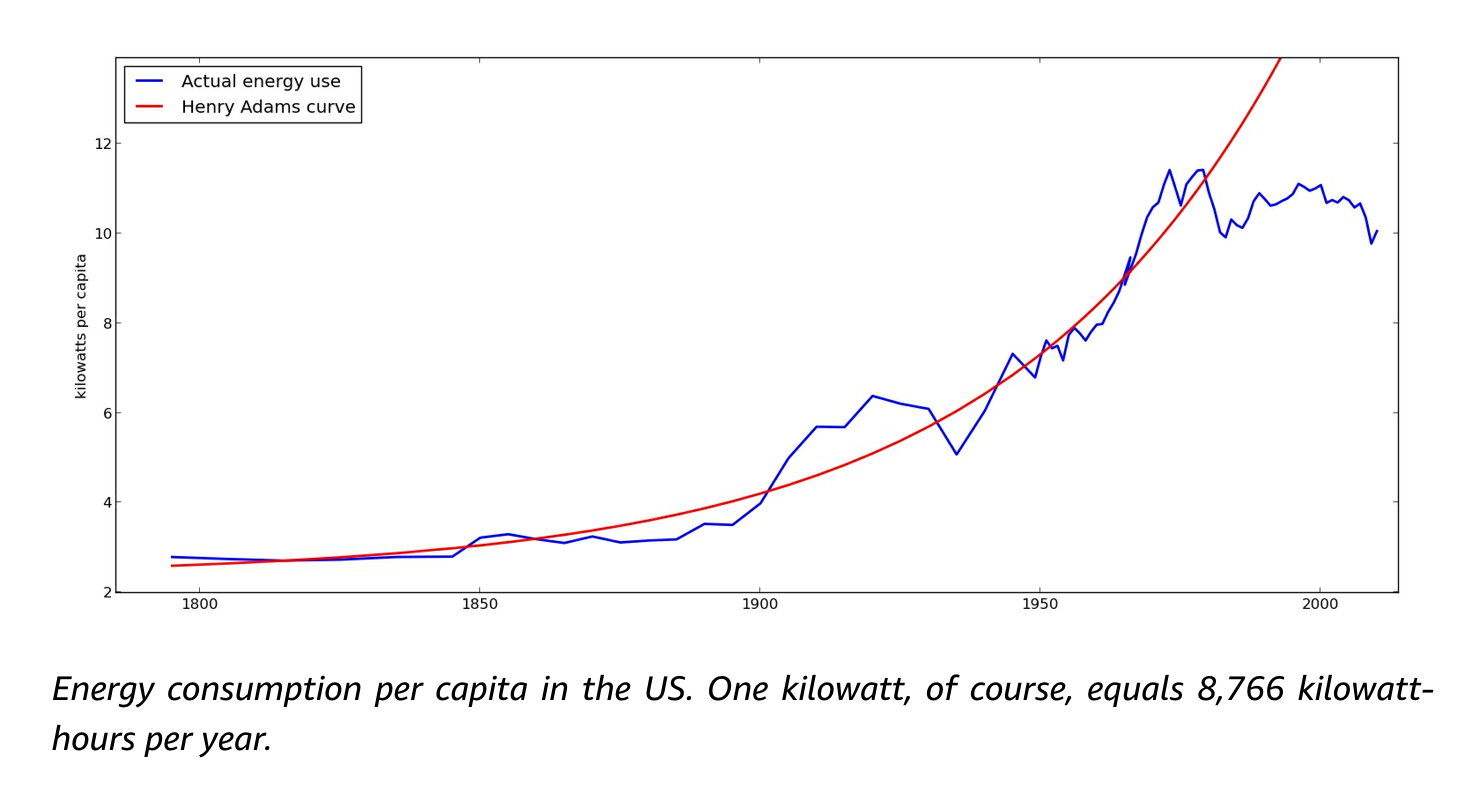

In a previous post, I discussed J. Storrs Hall’s concept of the Henry Adams Curve, an exponential increase in per-capita energy usage, sustained for a couple of centuries until it flatlined around 1970.

Here are some more charts of technological progress, many of which show exponential improvement curves, including in speed of transportation, energy density of batteries, and accuracy of clocks.

Each individual technology, over a long enough period of time, tends to follow not an exponential but an S-curve. It’s just the first part that looks exponential. But when we add up all the S-curves across the economy, we can get an exponential growth curve for the economy as a whole.

(And that’s only for advanced countries since the Industrial Revolution; if you look at trajectories that span both before and after industrialization, you get super-exponential curves. But what causes those and whether we should “expect” them is beyond the scope of this essay.)

This is why we talk about the rate of growth, and why it’s meaningful to compare the growth rate of the economy over a period of many years: the growth rate, expressed as a percentage, is constant for an exponential curve. It’s actually more meaningful to talk about “a 1% increase in GDP” than “a $200 billion increase”.

Exponentials in theory

My second reason for believing in exponentials is that there is a simple generative model to explain them.

Things that are growing exponentially are growing in proportion to their size. One way this can happen is if the thing (whether an economy, a business, or an organism) invests a constant proportion of itself into growth, and gets a constant return on that investment. For example, suppose an economy devotes 10% of its resources to growth each year, and each unit invested returns 1.3 units. The 10% invested comes back as 13%, a net gain equal to 3% of the economy, and because the same proportions hold every year, that produces a constant 3% growth rate.
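To make the arithmetic concrete, here is a minimal sketch of that reinvestment model in Python. The function name `simulate_growth` and the starting size of 100 are illustrative assumptions, as is the reading that the invested 10% is used up and replaced by its 1.3x return.

```python
def simulate_growth(initial_size, invest_share, return_per_unit, years):
    """Sizes of the economy at year 0, 1, ..., years under constant reinvestment."""
    sizes = [initial_size]
    for _ in range(years):
        size = sizes[-1]
        invested = size * invest_share
        # Assumption: the invested portion is consumed and replaced by its return.
        sizes.append(size - invested + invested * return_per_unit)
    return sizes


sizes = simulate_growth(initial_size=100.0, invest_share=0.10,
                        return_per_unit=1.3, years=50)
growth_rates = [later / earlier - 1 for earlier, later in zip(sizes, sizes[1:])]

# The percentage growth is the same every year, which is what makes the curve exponential.
print(f"first year: {growth_rates[0]:.2%}, last year: {growth_rates[-1]:.2%}")
print(f"size after 50 years: {sizes[-1]:.1f}")
```

In this sketch the growth rate works out to `invest_share * (return_per_unit - 1)`, which does not depend on the size of the economy. That independence is the whole point: a constant share and a constant return give a constant rate.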

For this reason, we see exponential growth curves in places other than the economy: for instance, in the growth of populations (both human and animal).

Is “productivity” shrinking?

In the paper “Are Ideas Getting Harder to Find?”, Bloom, Jones, Van Reenen, and Webb (BJRW) define a metric of “research productivity” that is equal to the growth rate (of a specific technology, or the economy as a whole) divided by the number of researchers used to produce that growth. They find that to maintain a constant growth rate in various areas requires an exponential increase in researchers. For instance, the number of researchers needed to continue advancing Moore’s Law has increased by a factor of 18 since 1971. From this, they conclude that research productivity is declining.

But wait: this metric compares a rate of growth to an absolute level of investment. This decision is motivated by a model in which each researcher should be able to generate “ideas” at a constant rate, and each idea should improve output by a constant percent. (Indeed, “ideas” in this context are pretty much defined as “things that improve output by a constant percent”, and the authors admit that a more accurate title for the paper would be “Is exponential growth getting harder to achieve?”) So if I have 30 researchers, and each generates 1 idea a year, and each idea improves output by 0.1%, that gives me a constant 3% growth rate.
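The metric and the idea model behind it can be sketched in a few lines of Python. The function names are hypothetical, and applying the factor of 18 to the 30-researcher toy example is an illustrative combination of the two numbers above, not a figure from the paper.

```python
def growth_rate(researchers, ideas_per_researcher, gain_per_idea):
    """Growth rate implied by the idea model: ideas per year times the gain per idea."""
    return researchers * ideas_per_researcher * gain_per_idea


def research_productivity(growth, researchers):
    """The metric as described above: growth rate divided by number of researchers."""
    return growth / researchers


# The toy example from the text: 30 researchers, 1 idea each per year, 0.1% per idea.
baseline_growth = growth_rate(researchers=30, ideas_per_researcher=1, gain_per_idea=0.001)
baseline_productivity = research_productivity(baseline_growth, researchers=30)

# Hold the growth rate fixed but use 18 times as many researchers.
later_productivity = research_productivity(baseline_growth, researchers=30 * 18)

print(f"growth rate: {baseline_growth:.1%}")
print(f"productivity relative to baseline: {later_productivity / baseline_productivity:.4f}")
```

Because the numerator is a rate and the denominator is a headcount, the metric falls to one eighteenth whenever the same growth rate takes eighteen times the people, whatever the absolute gains are.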

In an incisive commentary on this paper, Scott Alexander points out that this model leads to conclusions that are counterintuitive bordering on nonsensical, if applied historically. If US GDP growth since 1930 had scaled with the number of researchers, it would now be 50% per year, and average annual income would be $50 million. If progress in life expectancy had scaled with biomedical researchers since that time, we’d all be immortal by now. Scott concludes that “constant progress in science in response to exponential increases in inputs ought to be our null hypothesis.” BJRW’s data just confirms this common-sense intuition.

In addition to Scott’s examples, consider the model of a startup company. Successful startups grow their customer base and revenue exponentially, at least in their early years. But no one expects this to happen without also hiring a lot more employees. No company measures “employee productivity” defined as percentage revenue growth divided by absolute number of employees, nor would they fret about that metric decreasing.

My conclusion: Yes, ideas are getting harder to find, according to these definitions. And I agree with Scott that this is probably an inherent challenge of “low-hanging fruit” getting picked early, leaving only harder problems. In fact, if you accept that not all problems are equally hard to solve, it would be strange and disturbing if this weren’t the case: it would mean that early researchers were not optimizing at all for the best return on their investment.

It’s fairly easy to see how this applies within specific fields. To make progress in infectious disease mortality in the 1860s, all doctors had to do was wash their hands and sterilize their instruments. Today, we have to sequence genomes, print mRNA, and package it in lipid nanoparticles. To make progress in astronomy, Galileo just had to build a telescope; today we have to build LIGO.

I think it also applies to the economy as a whole. Progress in science and technology means an ever-expanding frontier of knowledge and ideas. If each researcher can only work on a constant “width” of that frontier, then we need an ever-expanding number of researchers just to keep pushing it forward at the same rate.

However, I don’t think this explains technological stagnation.

First, as far as we know, research productivity has always been declining, even in times when economic growth was highest, such as the early 20th century. That is, historically, progress does not seem to have depended on keeping research productivity constant.

Second, in my (oversimplified) model of a growing economy, exponentially declining research productivity does not cause a growth slowdown, because it is balanced by exponentially increasing investment.

If you are worried by “exponentially increasing investment” because it sounds unattainable—it’s not! It’s perfectly attainable, because it’s derived from the exponential growth in outputs. All you have to do is reinvest a constant percentage of your output into R&D, and you can get exponential improvements in technology even with declining “research productivity.”
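One way to see how the two exponentials can cancel is a toy simulation. This is a sketch under strong assumptions, not a model taken from any of the sources above: it assumes a constant share of output is reinvested in R&D, and that the growth bought per unit of R&D falls in inverse proportion to the size of the economy. The names `simulate_reinvestment`, `reinvest_share`, and `scale` are hypothetical.

```python
def simulate_reinvestment(years, output=100.0, reinvest_share=0.03, scale=1.0):
    """Track output, R&D investment, research productivity, and growth year by year."""
    rows = []
    for year in range(years):
        # A constant share of output goes to R&D, so investment grows with output.
        investment = reinvest_share * output
        # Assumption: each unit of R&D buys less growth as the economy gets larger.
        productivity = scale / output
        # The two effects cancel: growth equals scale * reinvest_share every year.
        growth = productivity * investment
        rows.append({
            "year": year,
            "output": output,
            "investment": investment,
            "productivity": productivity,
            "growth": growth,
        })
        output *= 1 + growth
    return rows


rows = simulate_reinvestment(years=40)
for row in (rows[0], rows[-1]):
    print(f"year {row['year']:2d}: investment={row['investment']:.2f}, "
          f"productivity={row['productivity']:.5f}, growth={row['growth']:.2%}")
```

In this sketch investment rises and productivity falls at the same exponential rate, so the growth rate stays flat. Different assumptions about how fast productivity declines would of course give a different result.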

Population growth and economic growth

I’ve been vague so far about what “investment” consists of. It has multiple dimensions. One is material resources, such as labs and equipment, which can be measured in dollars. But another is talent.

If exponential economic growth requires, among other things, exponential investment in R&D, then that means not only an exponentially growing research budget, but also an exponentially growing number of researchers. And that in turn requires an exponentially growing population. (Otherwise, eventually, all of humanity would become researchers, and then researcher growth would slow down.)
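The parenthetical can be made concrete with a small calculation. All numbers below are hypothetical placeholders chosen only to show the shape of the argument, and the function name is an assumption: if researchers grow faster than the population, their share of the population reaches 100% after a finite number of years.

```python
import math


def years_until_all_researchers(population, researchers,
                                researcher_growth, population_growth=0.0):
    """Years until researchers would equal the whole population, or infinity if never."""
    if researcher_growth <= population_growth:
        # The researcher share never rises, so the limit is never reached.
        return math.inf
    # The share grows by this constant factor each year.
    share_growth_factor = (1 + researcher_growth) / (1 + population_growth)
    return math.log(population / researchers) / math.log(share_growth_factor)


# Hypothetical inputs: a flat population of 8 billion, 8 million researchers,
# and researcher headcount growing 4% per year.
years_flat = years_until_all_researchers(8e9, 8e6, researcher_growth=0.04)

# If the population grows as fast as the researcher headcount, the limit is never hit.
years_matched = years_until_all_researchers(8e9, 8e6, researcher_growth=0.04,
                                            population_growth=0.04)

print(f"flat population: {years_flat:.0f} years")
print(f"matched growth: {years_matched}")
```

With these placeholder inputs the flat-population case runs out of people in under two centuries, while matched population growth removes the limit entirely.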

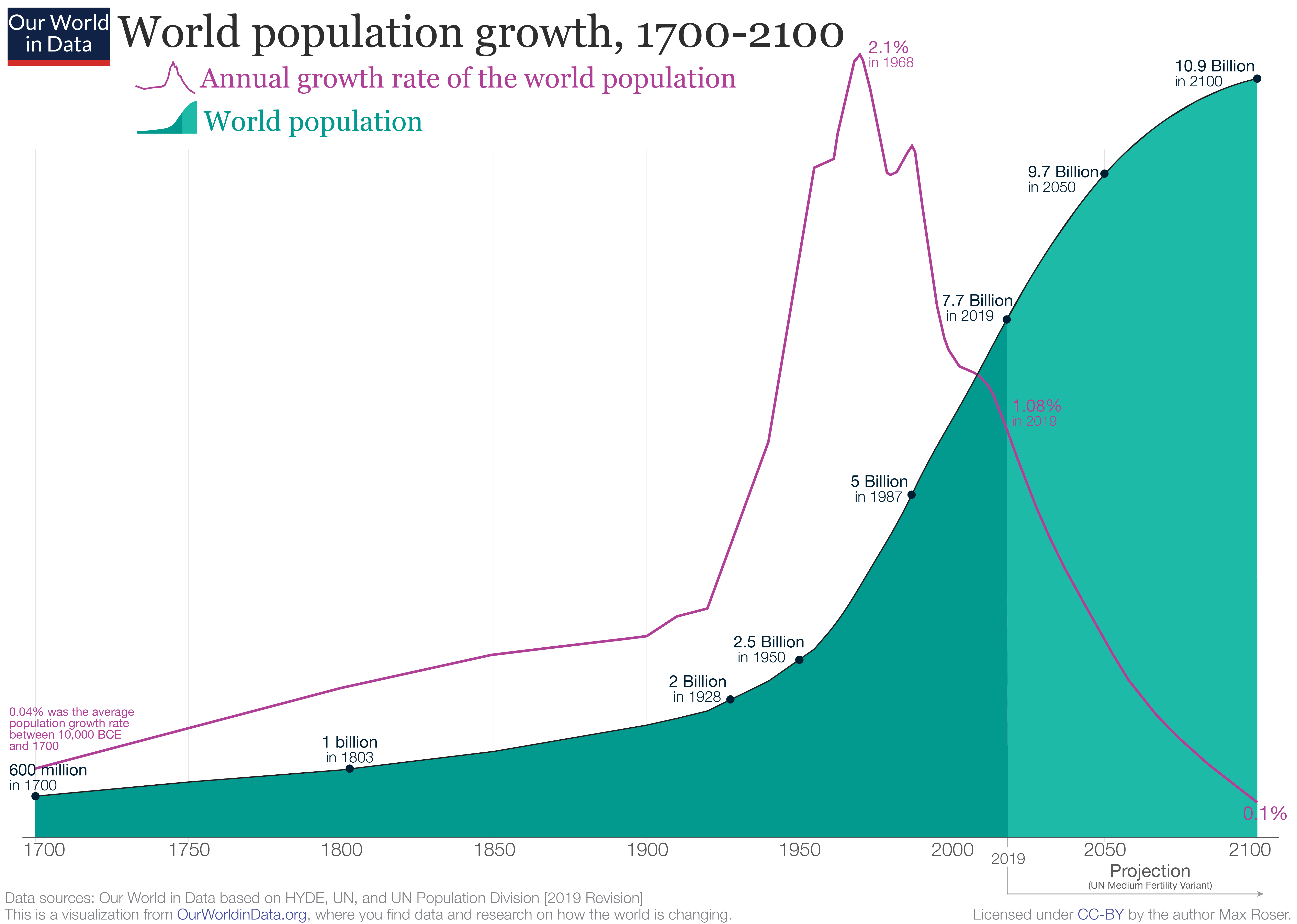

Of course, this too is no problem in theory. Any biological population can grow exponentially as long as there is a constant fertility rate above two offspring per female. And it was no problem in practice for humanity… up until the mid-20th century, at which point global fertility began dropping. In the last half-century, population growth has slowed, and is predicted to level off at around eleven billion by about 2100.

This is a relief from the standpoint of the challenges of population density and resource usage, but it’s deeply worrying from the standpoint of continued scientific, technological, and economic progress. If you take Julian Simon’s view that humanity itself is “the ultimate resource,” then a stagnant population is the ultimate resource crisis. Put another way, if the birth rate is a hundred million per year, then only a hundred one-in-a-million geniuses are born each year. To push forward the ever-expanding frontiers of science and technology (not to mention satisfying humanity’s needs for leadership, art, philosophy, etc.), we need all of them, and more each year.

The thesis that the population slowdown is the cause of a technological/economic slowdown is explored in another Scott Alexander post, “1960: The Year The Singularity Was Cancelled.” It’s interesting, but the data analysis involves a lot of stepping back and squinting. For me, the jury is still out.

If declining population growth is a cause of stagnation, how do we solve it? I don’t know. Lower fertility seems to be a consequence of wealth and education, and in that sense it’s a good thing. In fact, the idea that slower growth might have desirable causes, such as individual life preferences, was a theme of Dietrich Vollrath’s book Fully Grown.

But I’m not ready to give up on having both high rates of growth and maximally happy and fulfilling lives for individuals and families. Maybe technological breakthroughs will make it easier to bear and raise children. Maybe the fertility rate will pick up again if we begin settling other planets. Or maybe we can prevent the decline in research productivity through biologically enhanced intelligence, or (as Scott suggests) artificial intelligence.

Is exponential growth sustainable?

Another argument is that exponential growth is fundamentally unsustainable. This is a theme, for instance, of Vaclav Smil’s Growth: From Microorganisms to Megacities.

This argument can be made simply by pointing to resources: we will use up all fossil fuels, run out of usable land, etc. Or it can be made in a more sophisticated way by appeal to biological analogies. All biological populations grow exponentially at first, then level off as they hit resource limits. Herbivore populations run out of grass; carnivores run out of herbivores. As Charles Mann wrote in The Wizard and the Prophet, even bacteria hit the edges of their petri dish. Worse, if populations blow past their resource limits too fast, they might not experience a gentle leveling off, but a catastrophic crash.

In theory, yes, exponential growth of the economy can’t continue forever. But the hard limits are so far away that for almost all practical purposes today they can be treated as infinite. The limits only seem close when restricting one’s view to current technologies. But we have been consistently breaking technological limits for hundreds of years.

With continued progress, we are not limited by land area and fossil fuels. We are not even limited to planet Earth. We are limited only by the speed of light, the Hubble expansion constant, and the heat death of the universe. If we hit those limits, I’d say humanity had a pretty good run.

Thanks to Tyler Cowen, Ben Reinhardt, Phil Mohun, and Michael Goff for reviewing drafts of this post.